AI is rocking the world of policing -- and the consequences are still unclear.

British police are poised to go live with a predictive artificial intelligence system that will help officers assess the risk of suspects re-offending.

It's not Minority Report (yet), but it certainly sounds scary. Just like the evil AIs in the movies, this tool has an acronym: HART, which stands for Harm Assessment Risk Tool, and it's going live in Durham after a long trial.

The system, which classifies suspects at a low, medium, or high risk of committing a future offence, was tested in 2013 using data that Durham police gathered from 2008 to 2012.

Its results are mixed.

Forecasts that a suspect was low risk turned out to be accurate 98 percent of the time, while forecasts that they were high risk were accurate 88 percent of the time.

That's because the tool was designed to be very, very cautious and is likely to assign someone as medium or high risk to avoid releasing suspects who may commit a crime.

According to Sheena Urwin, head of criminal justice at Durham Constabulary, during the testing HART didn't impact officers' decisions and, when live, it will "support officers' decision making" rather than define it.

Urwin also explained to the BBC that suspects with no offending history would be less likely to be classed as high risk -- unless they were arrested for serious crimes.

Police could use HART to decide whether to keep a suspect in custody for longer, release them on bail before charge, or remand them in custody.

However, privacy and advocacy groups have expressed fears that the algorithm could replicate and amplify inherent biases around race, class, or gender.

"This can be hard to detect, particularly in self-learning systems, which carry greater risks," Jim Killock, Executive Director of Open Rights Group, told Mashable.

"While this process is reported to be 'advisory', there could be a tendency for officers to trust the machine on the assumption that it is neutral."

Whenever the data is systematically biased, outcomes can be discriminatory because learning models bring into the foreground assumptions that have been tacitly made by humans.

The Durham system includes data such as postcode and gender which go beyond a suspect's offending history.

Even if the system is very accurate, say 88 percent of the time, a "subset of the population can still have a much higher chance of being misclassified," Frederike Kaltheuner, policy officer for Privacy International, told Mashable.

For instance, if minorities are more likely to be put in the wrong basket, a system that is accurate on paper can still be racially biased.
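The arithmetic behind that point can be sketched in a few lines of Python. The counts below are invented purely for illustration and are not HART data; the group names and the `error_rate` helper are likewise hypothetical. The sketch only shows how an overall accuracy of 88 percent can coexist with very different error rates for different groups.

```python
# Hypothetical counts, made up for illustration only (not HART data).
groups = {
    "majority": {"total": 900, "misclassified": 90},
    "minority": {"total": 100, "misclassified": 30},
}


def error_rate(total, misclassified):
    """Share of people in a group who were put in the wrong risk category."""
    return misclassified / total


# Accuracy across everyone, ignoring group membership.
overall_total = sum(g["total"] for g in groups.values())
overall_wrong = sum(g["misclassified"] for g in groups.values())
overall_accuracy = 1 - error_rate(overall_total, overall_wrong)

# Error rate computed separately for each group.
per_group_error = {
    name: error_rate(g["total"], g["misclassified"])
    for name, g in groups.items()
}

print(f"overall accuracy: {overall_accuracy:.0%}")
for name, rate in per_group_error.items():
    print(f"{name} error rate: {rate:.0%}")
```

With these made-up numbers the system is right 88 percent of the time overall, yet a member of the smaller group is three times as likely to be misclassified as a member of the larger one.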

"It's important to stress that accuracy and fairness are not necessarily the same thing," Kaltheuner said.

Last year, an investigation by U.S. news site ProPublica shone a light on the alleged racial bias of an algorithm used by law enforcement to forecast the likelihood of a repeated offense.

Among other things, the algorithm was making overly negative predictions about black versus white suspects. The firm behind the system denied the allegations.

For example, ProPublica reports the cases of Brisha Borden, a black 18-year-old who stole a child’s bicycle and scooter, and Vernon Prater, a white 41-year-old who was picked up for shoplifting $86.35 worth of tools.

Prater was a seasoned criminal, having already been convicted of armed robbery and attempted armed robbery, while Borden had just a handful of misdemeanours. Yet something odd happened when they were arrested and charged.

A computer algorithm predicting the likelihood of each committing a future crime gave Borden an 8 (high risk), while Prater received a low-risk score of just 3.

Two years later, exactly the opposite happened: Prater was serving an eight-year prison term for breaking into a warehouse and stealing thousands of dollars’ worth of electronics while Borden had not received any new charges.

HART's authors, Durham police and the University of Cambridge's centre for evidence-based policing, defended the system in a submission to a parliamentary inquiry.

"Simply residing in a given postcode has no direct impact on the result, but must instead be combined with all of the other predictors in thousands of different ways before a final forecasted conclusion is reached."

They also noted that the model is just "advisory."

However, advocacy groups believe there are questions that need to be assessed before the system is used to make life-changing decisions for individuals.

"The lack of transparency around this system is disturbing," Silkie Carlo, policy officer at Liberty, said.

“If the police want to maintain public trust as technology develops, they need to be up front about exactly how it works.

"With people’s rights and safety at stake, Durham Police must open up about exactly what data this AI is using.”

Topics Artificial Intelligence

E. L. Konigsburg and Museumgoing for Children

E. L. Konigsburg and Museumgoing for Children

Perfumed hand sanitizer is the worst, so let's stop using it

Perfumed hand sanitizer is the worst, so let's stop using it

William Carlos Williams’s “Election Day”

William Carlos Williams’s “Election Day”

Timothée Chalamet and Zendaya are watching you (and yes, it's a meme)

Timothée Chalamet and Zendaya are watching you (and yes, it's a meme)

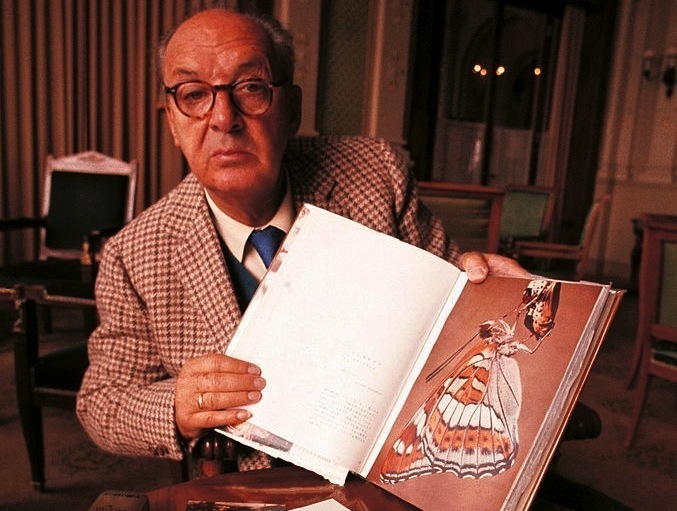

Remembering Nabokov as an American Writer

Remembering Nabokov as an American Writer

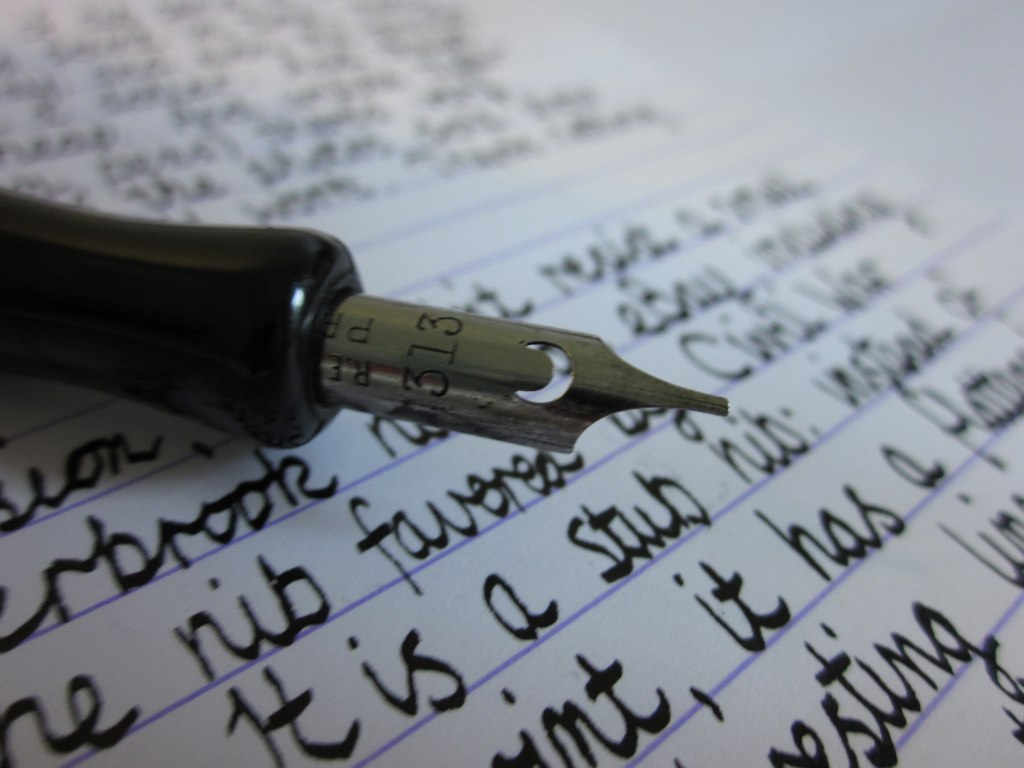

Shelby Foote on the Tools of the Trade

Shelby Foote on the Tools of the Trade

Maya Angelou, Denise Levertov, and Gary Snyder on Poetry

Maya Angelou, Denise Levertov, and Gary Snyder on Poetry

The Morning News Roundup for November 20, 2014

The Morning News Roundup for November 20, 2014

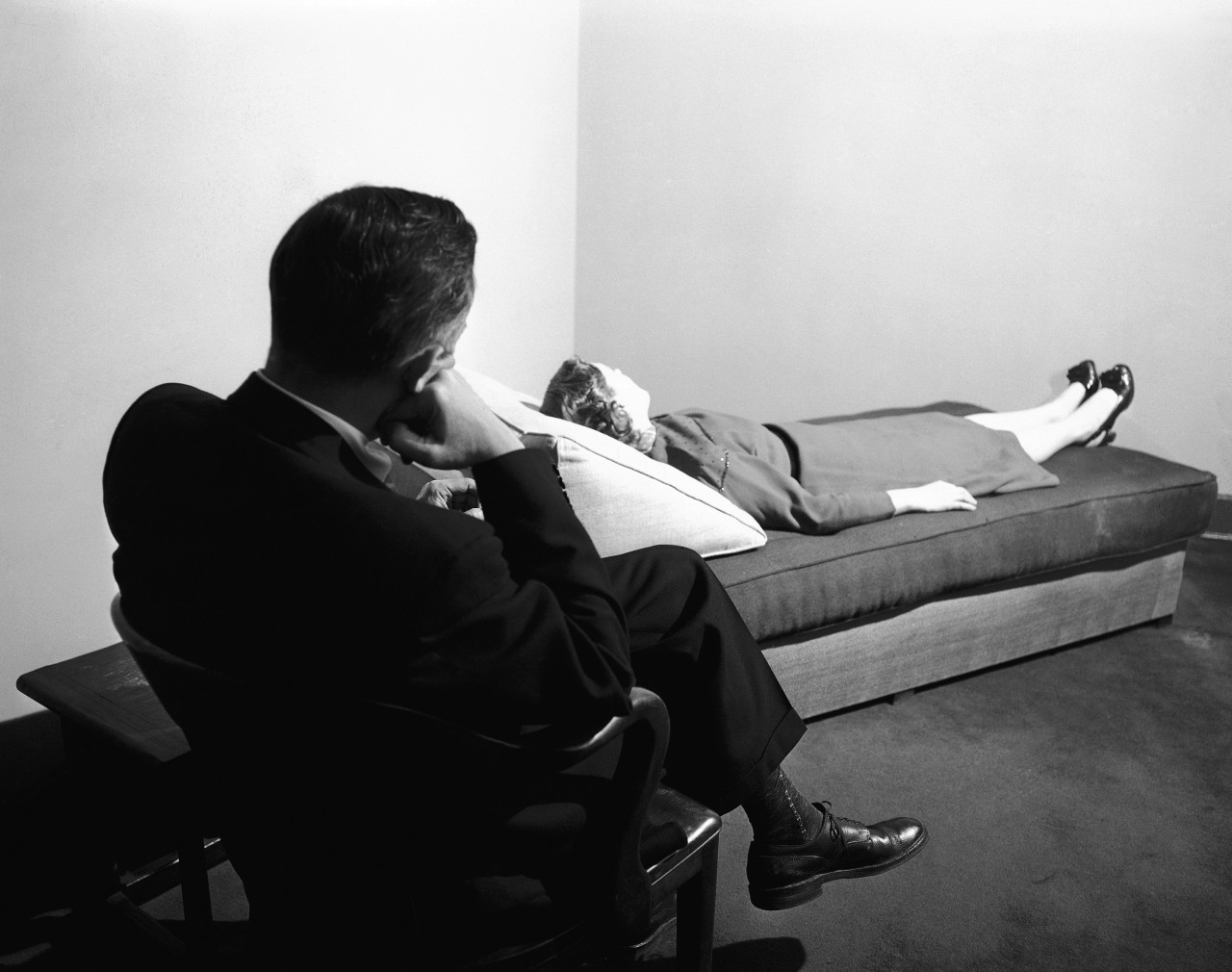

The Red Carpet: Last Bastion of Psychiatry

The Red Carpet: Last Bastion of Psychiatry

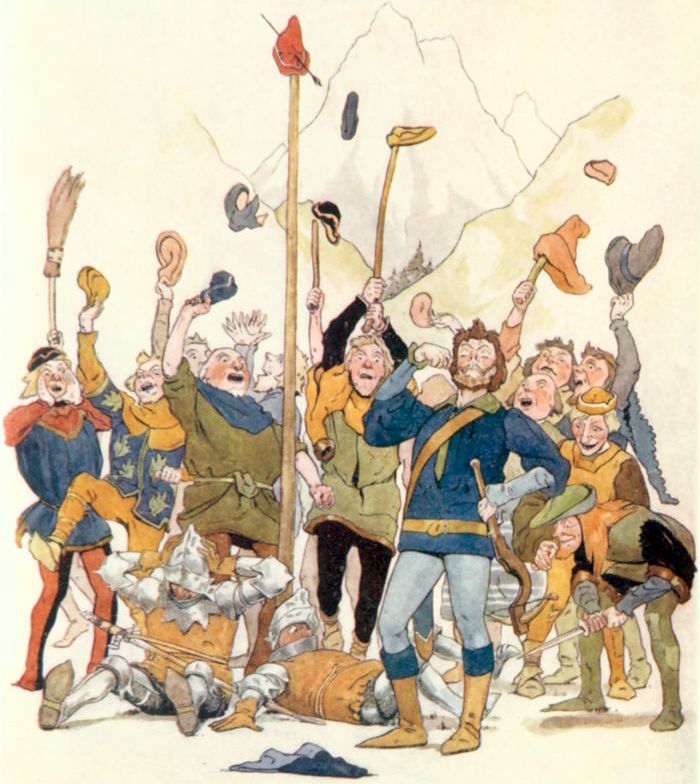

On This Day, William Tell Shot an Apple Off His Son’s Head

On This Day, William Tell Shot an Apple Off His Son’s Head

New on Our Masthead: Susannah Hunnewell and Adam Thirlwell

New on Our Masthead: Susannah Hunnewell and Adam Thirlwell

Can watching ethical porn help improve our sexual body image?

Can watching ethical porn help improve our sexual body image?

We need to talk about Jason Sudeikis' Twitter likes

We need to talk about Jason Sudeikis' Twitter likes

Why I Loved Wayne Newton’s “The Entertainer”

Why I Loved Wayne Newton’s “The Entertainer”

Three Millennia Later, Scholars Still Struggle with Sappho

Three Millennia Later, Scholars Still Struggle with Sappho

The Morning News Roundup for November 4, 2014

The Morning News Roundup for November 4, 2014

TikTok's nostalgia

TikTok's nostalgia

Gertie Turns One Hundred

Gertie Turns One Hundred

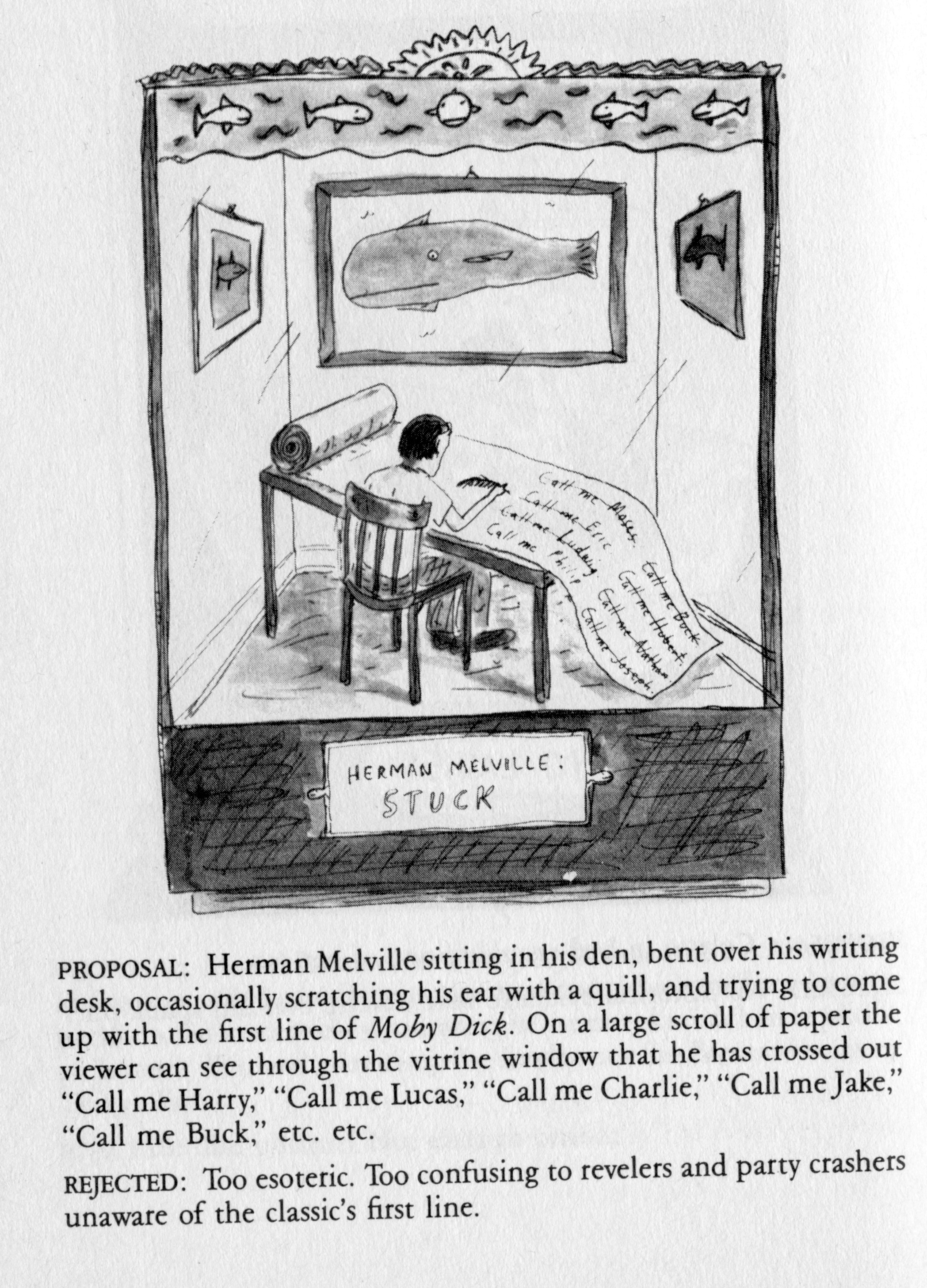

Roz Chast‘s Ideas for the Paris Review Revel, Circa 1985

Roz Chast‘s Ideas for the Paris Review Revel, Circa 1985

'Quordle' today: See each 'Quordle' answer and hints for September 2, 2023

'Quordle' today: See each 'Quordle' answer and hints for September 2, 2023

Sky Ferreira had creative control of her own unretouched 'Playboy' coverGoogle Assistant can be your interpreter, and it's just as cool as it soundsHillary Clinton's 'Between Two Ferns' interview is hilariously awkwardHackers leak copy of Michelle Obama's passport, but is it real?Samsung's foldable phone to arrive in the first half of 2019Marie Kondo memes imagine her as a bloodthirsty demon spiritGigi Hadid would like strange men to stop grabbing her bodyThe Facebook feature fueling the police brutality protests in AmericaBruno Mars points out a glaring inconsistency in the lyrics to one of his biggest hitsAmazon Echo Auto is finally hereIBM just unveiled a 'quantum computing system' for commercial useKit Harington was extremely over 'Game of Thrones' by the endImpossible Burger 2.0 taste test: Simulated meat gets an upgradeCritics slam M. Night Shyamalan's 'Glass'Big ass fatberg found in sewer of British seaside townCritics slam M. Night Shyamalan's 'Glass'AutoX's selfCartoonist compares gay rights advocates to Nazis, gets a powerful history lessonI tried to ignore Trump for a whole month. Here's what I learned.Tim Cook: Apple's biggest contribution to mankind will be about health Nissan becomes first global automaker to partner with Huawei on smart cockpit · TechNode How to watch 'Problemista' at home: When will the A24 film be streaming? 
Xiaomi reportedly boosts production of electric vehicles to meet demand · TechNode Wow, NASA spacecraft spotted lightning in Jupiter storm Wordle today: The answer and hints for June 21 BYD launches first model featuring Huawei’s assisted driving tech · TechNode NYT's The Mini crossword answers for June 21 Webb space telescope snaps pic of a very powerful, and unique, object NASA is finally talking about UFOs: 'This is a serious business' The iPhone App Store will get its first game streaming app this month How to watch 'Oppenheimer': Prime Video streaming deals Hearthstone earns over $140 million in 40 days after China return · TechNode Astronomers cast doubt on 'runaway black hole' discovery Bugatti's new $4 million Tourbillon has the wildest steering wheel ever NASA video shows astonishing view into Mars crater James Webb telescope just stared into the core of a fascinating galaxy Walmart+ Week TV deals: A few cheap 4K TVs, little more 103 ByteDance employees dismissed for corruption and other misconduct · TechNode 1Password adds recovery codes in case you get locked out of your account Georgia vs. Czech Republic 2024 livestream: Watch Euro 2024 for free

1.1133s , 8069.1484375 kb

Copyright © 2025 Powered by 【Watch Vidocq Online】,Pursuit Information Network